https://github.com/apache/spark

- HEAD

- refs/heads/branch-0.5

- refs/heads/branch-0.6

- refs/heads/branch-0.7

- refs/heads/branch-0.8

- refs/heads/branch-0.9

- refs/heads/branch-1.0

- refs/heads/branch-1.0-jdbc

- refs/heads/branch-1.1

- refs/heads/branch-1.2

- refs/heads/branch-1.3

- refs/heads/branch-1.4

- refs/heads/branch-1.5

- refs/heads/branch-1.6

- refs/heads/branch-2.0

- refs/heads/branch-2.1

- refs/heads/branch-2.2

- refs/heads/branch-2.3

- refs/heads/branch-2.4

- refs/heads/branch-3.0

- refs/heads/branch-3.1

- refs/heads/branch-3.2

- refs/heads/branch-3.3

- refs/heads/branch-3.4

- refs/heads/branch-3.5

- refs/heads/master

- refs/remotes/origin/branch-0.8

- refs/remotes/origin/td-rdd-save

- refs/tags/0.3-scala-2.8

- refs/tags/0.3-scala-2.9

- refs/tags/2.0.0-preview

- refs/tags/alpha-0.1

- refs/tags/alpha-0.2

- refs/tags/v0.5.0

- refs/tags/v0.5.1

- refs/tags/v0.5.2

- refs/tags/v0.6.0

- refs/tags/v0.6.0-yarn

- refs/tags/v0.6.1

- refs/tags/v0.6.2

- refs/tags/v0.7.0

- refs/tags/v0.7.0-bizo-1

- refs/tags/v0.7.1

- refs/tags/v0.7.2

- refs/tags/v0.9.1

- refs/tags/v0.9.2

- refs/tags/v1.0.0

- refs/tags/v1.0.1

- refs/tags/v1.0.2

- refs/tags/v1.1.0

- refs/tags/v1.1.1

- refs/tags/v1.2.0

- refs/tags/v1.2.1

- refs/tags/v1.2.2

- refs/tags/v1.3.0

- refs/tags/v1.3.1

- refs/tags/v1.4.0

- refs/tags/v1.4.1

- refs/tags/v1.5.0-rc1

- refs/tags/v1.5.0-rc2

- refs/tags/v1.5.0-rc3

- refs/tags/v1.5.1

- refs/tags/v1.6.0

- refs/tags/v1.6.1

- refs/tags/v1.6.2

- refs/tags/v1.6.3

- refs/tags/v2.0.0

- refs/tags/v2.0.1

- refs/tags/v2.0.2

- refs/tags/v2.1.0

- refs/tags/v2.1.1

- refs/tags/v2.1.2

- refs/tags/v2.1.2-rc1

- refs/tags/v2.1.2-rc2

- refs/tags/v2.1.2-rc3

- refs/tags/v2.1.2-rc4

- refs/tags/v2.1.3

- refs/tags/v2.1.3-rc1

- refs/tags/v2.1.3-rc2

- refs/tags/v2.2.0

- refs/tags/v2.2.1

- refs/tags/v2.2.1-rc1

- refs/tags/v2.2.1-rc2

- refs/tags/v2.2.2

- refs/tags/v2.2.2-rc1

- refs/tags/v2.2.2-rc2

- refs/tags/v2.2.3

- refs/tags/v2.2.3-rc1

- refs/tags/v2.3.0

- refs/tags/v2.3.0-rc1

- refs/tags/v2.3.0-rc2

- refs/tags/v2.3.0-rc3

- refs/tags/v2.3.0-rc4

- refs/tags/v2.3.1

- refs/tags/v2.3.1-rc1

- refs/tags/v2.3.1-rc2

- refs/tags/v2.3.1-rc3

- refs/tags/v2.3.1-rc4

- refs/tags/v2.3.2

- refs/tags/v2.3.2-rc1

- refs/tags/v2.3.2-rc2

- refs/tags/v2.3.2-rc3

- refs/tags/v2.3.2-rc4

- refs/tags/v2.3.2-rc5

- refs/tags/v2.3.2-rc6

- refs/tags/v2.3.3

- refs/tags/v2.3.3-rc1

- refs/tags/v2.3.3-rc2

- refs/tags/v2.3.4

- refs/tags/v2.3.4-rc1

- refs/tags/v2.4.0

- refs/tags/v2.4.0-rc1

- refs/tags/v2.4.0-rc2

- refs/tags/v2.4.0-rc3

- refs/tags/v2.4.0-rc4

- refs/tags/v2.4.0-rc5

- refs/tags/v2.4.1

- refs/tags/v2.4.1-rc1

- refs/tags/v2.4.1-rc2

- refs/tags/v2.4.1-rc3

- refs/tags/v2.4.1-rc4

- refs/tags/v2.4.1-rc5

- refs/tags/v2.4.1-rc6

- refs/tags/v2.4.1-rc7

- refs/tags/v2.4.1-rc8

- refs/tags/v2.4.1-rc9

- refs/tags/v2.4.2

- refs/tags/v2.4.2-rc1

- refs/tags/v2.4.3

- refs/tags/v2.4.3-rc1

- refs/tags/v2.4.4

- refs/tags/v2.4.4-rc1

- refs/tags/v2.4.4-rc2

- refs/tags/v2.4.4-rc3

- refs/tags/v2.4.5

- refs/tags/v2.4.5-rc1

- refs/tags/v2.4.5-rc2

- refs/tags/v2.4.6

- refs/tags/v2.4.6-rc1

- refs/tags/v2.4.6-rc2

- refs/tags/v2.4.6-rc3

- refs/tags/v2.4.6-rc4

- refs/tags/v2.4.6-rc5

- refs/tags/v2.4.6-rc6

- refs/tags/v2.4.6-rc7

- refs/tags/v2.4.6-rc8

- refs/tags/v2.4.7

- refs/tags/v2.4.7-rc1

- refs/tags/v2.4.7-rc2

- refs/tags/v2.4.7-rc3

- refs/tags/v2.4.8

- refs/tags/v2.4.8-rc1

- refs/tags/v2.4.8-rc2

- refs/tags/v2.4.8-rc3

- refs/tags/v2.4.8-rc4

- refs/tags/v3.0.0

- refs/tags/v3.0.0-preview2

- refs/tags/v3.0.0-preview2-rc1

- refs/tags/v3.0.0-preview2-rc2

- refs/tags/v3.0.0-rc1

- refs/tags/v3.0.0-rc2

- refs/tags/v3.0.0-rc3

- refs/tags/v3.0.1

- refs/tags/v3.0.1-rc1

- refs/tags/v3.0.1-rc2

- refs/tags/v3.0.1-rc3

- refs/tags/v3.0.2

- refs/tags/v3.0.2-rc1

- refs/tags/v3.0.3

- refs/tags/v3.0.3-rc1

- refs/tags/v3.1.0-rc1

- refs/tags/v3.1.1

- refs/tags/v3.1.1-rc1

- refs/tags/v3.1.1-rc2

- refs/tags/v3.1.1-rc3

- refs/tags/v3.1.2

- refs/tags/v3.1.2-rc1

- refs/tags/v3.1.3

- refs/tags/v3.1.3-rc1

- refs/tags/v3.1.3-rc2

- refs/tags/v3.1.3-rc3

- refs/tags/v3.1.3-rc4

- refs/tags/v3.2.0

- refs/tags/v3.2.0-rc1

- refs/tags/v3.2.0-rc2

- refs/tags/v3.2.0-rc3

- refs/tags/v3.2.0-rc4

- refs/tags/v3.2.0-rc5

- refs/tags/v3.2.0-rc6

- refs/tags/v3.2.0-rc7

- refs/tags/v3.2.1

- refs/tags/v3.2.1-rc1

- refs/tags/v3.2.1-rc2

- refs/tags/v3.2.2

- refs/tags/v3.2.2-rc1

- refs/tags/v3.2.3

- refs/tags/v3.2.3-rc1

- refs/tags/v3.2.4

- refs/tags/v3.2.4-rc1

- refs/tags/v3.3.0

- refs/tags/v3.3.0-rc1

- refs/tags/v3.3.0-rc2

- refs/tags/v3.3.0-rc3

- refs/tags/v3.3.0-rc4

- refs/tags/v3.3.0-rc5

- refs/tags/v3.3.0-rc6

- refs/tags/v3.3.1

- refs/tags/v3.3.1-rc1

- refs/tags/v3.3.1-rc2

- refs/tags/v3.3.1-rc3

- refs/tags/v3.3.1-rc4

- refs/tags/v3.3.2

- refs/tags/v3.3.2-rc1

- refs/tags/v3.3.3

- refs/tags/v3.3.3-rc1

- refs/tags/v3.3.4

- refs/tags/v3.3.4-rc1

- refs/tags/v3.4.0

- refs/tags/v3.4.0-rc1

- refs/tags/v3.4.0-rc2

- refs/tags/v3.4.0-rc3

- refs/tags/v3.4.0-rc4

- refs/tags/v3.4.0-rc5

- refs/tags/v3.4.0-rc6

- refs/tags/v3.4.0-rc7

- refs/tags/v3.4.1

- refs/tags/v3.4.1-rc1

- refs/tags/v3.4.2

- refs/tags/v3.4.2-rc1

- refs/tags/v3.4.3

- refs/tags/v3.4.3-rc1

- refs/tags/v3.4.3-rc2

- refs/tags/v3.5.0

- refs/tags/v3.5.0-rc1

- refs/tags/v3.5.0-rc2

- refs/tags/v3.5.0-rc3

- refs/tags/v3.5.0-rc4

- refs/tags/v3.5.0-rc5

- refs/tags/v3.5.1

- refs/tags/v3.5.1-rc1

- refs/tags/v3.5.1-rc2

- refs/tags/v4.0.0-preview1

- refs/tags/v4.0.0-preview1-rc1

- refs/tags/v4.0.0-preview1-rc2

- refs/tags/v4.0.0-preview1-rc3

Permalinks

To reference or cite the objects present in the Software Heritage archive, permalinks based on SoftWare Hash IDentifiers (SWHIDs) must be used.
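
For file contents, a SWHID has the form `swh:1:cnt:<hash>`, where the hash is Git's blob hash: SHA-1 over the header `blob <length>` plus a NUL byte, followed by the raw bytes. The sketch below computes one with only `hashlib`. The helper name `content_swhid` is hypothetical. Revision SWHIDs (`swh:1:rev:`) use the revision's full 40-hex-digit identifier, which for revisions imported from Git normally matches the Git commit hash. The table below shows only abbreviated identifiers.

```python
import hashlib


def content_swhid(data: bytes) -> str:
    """Return the SWHID of a content object (the raw bytes of a file).

    The intrinsic hash is Git's blob hash: SHA-1 over b"blob <len>\\0" + data.
    """
    header = b"blob %d\x00" % len(data)
    return "swh:1:cnt:" + hashlib.sha1(header + data).hexdigest()
```

For example, `content_swhid(b"")` gives `swh:1:cnt:e69de29bb2d1d6434b8b29ae775ad8c2e48c5391`. That is the well-known Git hash of the empty blob.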

| Revision | Author | Author Date | Message | Commit Date |
|---|---|---|---|---|
| 8e4a99b | Wenchen Fan | 10 October 2018, 13:26:12 UTC | Preparing Spark release v2.4.0-rc3 | 10 October 2018, 13:26:12 UTC |
| 404c840 | Maxim Gekk | 09 October 2018, 06:35:00 UTC | [SPARK-25669][SQL] Check CSV header only when it exists ## What changes were proposed in this pull request? Currently the first row of dataset of CSV strings is compared to field names of user specified or inferred schema independently of presence of CSV header. It causes false-positive error messages. For example, parsing `"1,2"` outputs the error: ```java java.lang.IllegalArgumentException: CSV header does not conform to the schema. Header: 1, 2 Schema: _c0, _c1 Expected: _c0 but found: 1 ``` In the PR, I propose: - Checking CSV header only when it exists - Filter header from the input dataset only if it exists ## How was this patch tested? Added a test to `CSVSuite` which reproduces the issue. Closes #22656 from MaxGekk/inferred-header-check. Authored-by: Maxim Gekk <maxim.gekk@databricks.com> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit 46fe40838aa682a7073dd6f1373518b0c8498a94) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 09 October 2018, 06:36:33 UTC |
| 4baa4d4 | Tathagata Das | 08 October 2018, 21:32:04 UTC | [SPARK-25639][DOCS] Added docs for foreachBatch, python foreach and multiple watermarks ## What changes were proposed in this pull request? Added - Python foreach - Scala, Java and Python foreachBatch - Multiple watermark policy - The semantics of what changes are allowed to the streaming between restarts. ## How was this patch tested? No tests Closes #22627 from tdas/SPARK-25639. Authored-by: Tathagata Das <tathagata.das1565@gmail.com> Signed-off-by: Tathagata Das <tathagata.das1565@gmail.com> (cherry picked from commit f9935a3f85f46deef2cb7b213c1c02c8ff627a8c) Signed-off-by: Tathagata Das <tathagata.das1565@gmail.com> | 08 October 2018, 21:32:18 UTC |
| 193ce77 | shivusondur | 08 October 2018, 07:43:08 UTC | [SPARK-25677][DOC] spark.io.compression.codec = org.apache.spark.io.ZstdCompressionCodec throwing IllegalArgumentException Exception ## What changes were proposed in this pull request? Documentation is updated with proper classname org.apache.spark.io.ZStdCompressionCodec ## How was this patch tested? we used the spark.io.compression.codec = org.apache.spark.io.ZStdCompressionCodec and verified the logs. Closes #22669 from shivusondur/CompressionIssue. Authored-by: shivusondur <shivusondur@gmail.com> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit 1a6815cd9f421a106f8d96a36a53042a00f02386) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 08 October 2018, 07:43:35 UTC |
| 692ddb3 | Liang-Chi Hsieh | 08 October 2018, 07:18:08 UTC | [SPARK-25591][PYSPARK][SQL] Avoid overwriting deserialized accumulator ## What changes were proposed in this pull request? If we use accumulators in more than one UDFs, it is possible to overwrite deserialized accumulators and its values. We should check if an accumulator was deserialized before overwriting it in accumulator registry. ## How was this patch tested? Added test. Closes #22635 from viirya/SPARK-25591. Authored-by: Liang-Chi Hsieh <viirya@gmail.com> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit cb90617f894fd51a092710271823ec7d1cd3a668) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 08 October 2018, 07:18:27 UTC |
| 4214ddd | hyukjinkwon | 08 October 2018, 07:07:06 UTC | [SPARK-25673][BUILD] Remove Travis CI which enables Java lint check ## What changes were proposed in this pull request? https://github.com/apache/spark/pull/12980 added Travis CI file mainly for linter because we disabled Java lint check in Jenkins. It's enabled as of https://github.com/apache/spark/pull/21399 and now SBT runs it. Looks we can now remove the file added before. ## How was this patch tested? N/A Closes #22665 Closes #22667 from HyukjinKwon/SPARK-25673. Authored-by: hyukjinkwon <gurwls223@apache.org> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit 219922422003e59cc8b3bece60778536759fa669) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 08 October 2018, 07:07:35 UTC |
| c8b9409 | gatorsmile | 06 October 2018, 22:49:41 UTC | [SPARK-25671] Build external/spark-ganglia-lgpl in Jenkins Test ## What changes were proposed in this pull request? Currently, we do not build external/spark-ganglia-lgpl in Jenkins tests when the code is changed. ## How was this patch tested? N/A Closes #22658 from gatorsmile/buildGanglia. Authored-by: gatorsmile <gatorsmile@gmail.com> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit 8bb242902760535d12c6c40c5d8481a98fdc11e0) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 06 October 2018, 22:49:55 UTC |
| 48e2e6f | Dongjoon Hyun | 06 October 2018, 16:40:42 UTC | [SPARK-25644][SS][FOLLOWUP][BUILD] Fix Scala 2.12 build error due to foreachBatch ## What changes were proposed in this pull request? This PR fixes the Scala-2.12 build error due to ambiguity in `foreachBatch` test cases. - https://amplab.cs.berkeley.edu/jenkins/view/Spark%20QA%20Test%20(Dashboard)/job/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/428/console ```scala [error] /home/jenkins/workspace/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/sql/core/src/test/scala/org/apache/spark/sql/execution/streaming/sources/ForeachBatchSinkSuite.scala:102: ambiguous reference to overloaded definition, [error] both method foreachBatch in class DataStreamWriter of type (function: org.apache.spark.api.java.function.VoidFunction2[org.apache.spark.sql.Dataset[Int],Long])org.apache.spark.sql.streaming.DataStreamWriter[Int] [error] and method foreachBatch in class DataStreamWriter of type (function: (org.apache.spark.sql.Dataset[Int], Long) => Unit)org.apache.spark.sql.streaming.DataStreamWriter[Int] [error] match argument types ((org.apache.spark.sql.Dataset[Int], Any) => Unit) [error] ds.writeStream.foreachBatch((_, _) => {}).trigger(Trigger.Continuous("1 second")).start() [error] ^ [error] /home/jenkins/workspace/spark-master-test-maven-hadoop-2.7-ubuntu-scala-2.12/sql/core/src/test/scala/org/apache/spark/sql/execution/streaming/sources/ForeachBatchSinkSuite.scala:106: ambiguous reference to overloaded definition, [error] both method foreachBatch in class DataStreamWriter of type (function: org.apache.spark.api.java.function.VoidFunction2[org.apache.spark.sql.Dataset[Int],Long])org.apache.spark.sql.streaming.DataStreamWriter[Int] [error] and method foreachBatch in class DataStreamWriter of type (function: (org.apache.spark.sql.Dataset[Int], Long) => Unit)org.apache.spark.sql.streaming.DataStreamWriter[Int] [error] match argument types ((org.apache.spark.sql.Dataset[Int], Any) => Unit) [error] ds.writeStream.foreachBatch((_, _) => {}).partitionBy("value").start() [error] ^ ``` ## How was this patch tested? Manual. Since this failure occurs in Scala-2.12 profile and test cases, Jenkins will not test this. We need to build with Scala-2.12 and run the tests. Closes #22649 from dongjoon-hyun/SPARK-SCALA212. Authored-by: Dongjoon Hyun <dongjoon@apache.org> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit 9cbf105ab1256d65f027115ba5505842ce8fffe3) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 06 October 2018, 16:40:54 UTC |
| a2991d2 | Marcelo Vanzin | 06 October 2018, 04:15:16 UTC | [SPARK-25646][K8S] Fix docker-image-tool.sh on dev build. The docker file was referencing a path that only existed in the distribution tarball; it needs to be parameterized so that the right path can be used in a dev build. Tested on local dev build. Closes #22634 from vanzin/SPARK-25646. Authored-by: Marcelo Vanzin <vanzin@cloudera.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit 58287a39864db463eeef17d1152d664be021d9ef) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 06 October 2018, 04:18:12 UTC |
| 0a70afd | Shixiong Zhu | 05 October 2018, 17:45:15 UTC | [SPARK-25644][SS] Fix java foreachBatch in DataStreamWriter ## What changes were proposed in this pull request? The java `foreachBatch` API in `DataStreamWriter` should accept `java.lang.Long` rather `scala.Long`. ## How was this patch tested? New java test. Closes #22633 from zsxwing/fix-java-foreachbatch. Authored-by: Shixiong Zhu <zsxwing@gmail.com> Signed-off-by: Shixiong Zhu <zsxwing@gmail.com> | 05 October 2018, 18:18:49 UTC |

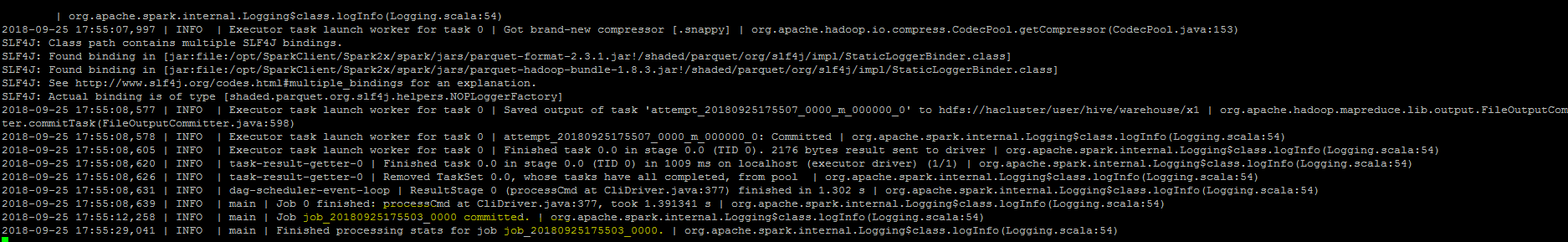

| 2c700ee | s71955 | 05 October 2018, 05:09:16 UTC | [SPARK-25521][SQL] Job id showing null in the logs when insert into command Job is finished. ## What changes were proposed in this pull request? ``As part of insert command in FileFormatWriter, a job context is created for handling the write operation , While initializing the job context using setupJob() API in HadoopMapReduceCommitProtocol , we set the jobid in the Jobcontext configuration.In FileFormatWriter since we are directly getting the jobId from the map reduce JobContext the job id will come as null while adding the log. As a solution we shall get the jobID from the configuration of the map reduce Jobcontext.`` ## How was this patch tested? Manually, verified the logs after the changes.  Closes #22572 from sujith71955/master_log_issue. Authored-by: s71955 <sujithchacko.2010@gmail.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 459700727fadf3f35a211eab2ffc8d68a4a1c39a) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 05 October 2018, 08:51:59 UTC |
| c9bb83a | Wenchen Fan | 04 October 2018, 12:15:21 UTC | [SPARK-25602][SQL] SparkPlan.getByteArrayRdd should not consume the input when not necessary ## What changes were proposed in this pull request? In `SparkPlan.getByteArrayRdd`, we should only call `it.hasNext` when the limit is not hit, as `iter.hasNext` may produce one row and buffer it, and cause wrong metrics. ## How was this patch tested? new tests Closes #22621 from cloud-fan/range. Authored-by: Wenchen Fan <wenchen@databricks.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 71c24aad36ae6b3f50447a019bf893490dcf1cf4) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 04 October 2018, 12:16:32 UTC |
| 0763b75 | hyukjinkwon | 04 October 2018, 01:36:23 UTC | [SPARK-25601][PYTHON] Register Grouped aggregate UDF Vectorized UDFs for SQL Statement ## What changes were proposed in this pull request? This PR proposes to register Grouped aggregate UDF Vectorized UDFs for SQL Statement, for instance: ```python from pyspark.sql.functions import pandas_udf, PandasUDFType @pandas_udf("integer", PandasUDFType.GROUPED_AGG) def sum_udf(v): return v.sum() spark.udf.register("sum_udf", sum_udf) q = "SELECT v2, sum_udf(v1) FROM VALUES (3, 0), (2, 0), (1, 1) tbl(v1, v2) GROUP BY v2" spark.sql(q).show() ``` ``` +---+-----------+ \| v2\|sum_udf(v1)\| +---+-----------+ \| 1\| 1\| \| 0\| 5\| +---+-----------+ ``` ## How was this patch tested? Manual test and unit test. Closes #22620 from HyukjinKwon/SPARK-25601. Authored-by: hyukjinkwon <gurwls223@apache.org> Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 04 October 2018, 01:43:42 UTC |
| 443d12d | Marco Gaido | 03 October 2018, 14:28:34 UTC | [SPARK-25538][SQL] Zero-out all bytes when writing decimal ## What changes were proposed in this pull request? In #20850 when writing non-null decimals, instead of zero-ing all the 16 allocated bytes, we zero-out only the padding bytes. Since we always allocate 16 bytes, if the number of bytes needed for a decimal is lower than 9, then this means that the bytes between 8 and 16 are not zero-ed. I see 2 solutions here: - we can zero-out all the bytes in advance as it was done before #20850 (safer solution IMHO); - we can allocate only the needed bytes (may be a bit more efficient in terms of memory used, but I have not investigated the feasibility of this option). Hence I propose here the first solution in order to fix the correctness issue. We can eventually switch to the second if we think is more efficient later. ## How was this patch tested? Running the test attached in the JIRA + added UT Closes #22602 from mgaido91/SPARK-25582. Authored-by: Marco Gaido <marcogaido91@gmail.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit d7ae36a810bfcbedfe7360eb2cdbbc3ca970e4d0) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 03 October 2018, 14:28:48 UTC |
| ea4068a | Shahid | 02 October 2018, 15:05:09 UTC | [SPARK-25583][DOC] Add history-server related configuration in the documentation. ## What changes were proposed in this pull request? Add history-server related configuration in the documentation. Some of the history server related configurations were missing in the documentation.Like, 'spark.history.store.maxDiskUsage', 'spark.ui.liveUpdate.period' etc. ## How was this patch tested?    Closes #22601 from shahidki31/historyConf. Authored-by: Shahid <shahidki31@gmail.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit 71876633f3af706408355b5fb561b58dbc593360) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 02 October 2018, 15:06:32 UTC |
| ad7b3f6 | Sean Owen | 02 October 2018, 02:35:12 UTC | [SPARK-25578][BUILD] Update to Scala 2.12.7 ## What changes were proposed in this pull request? Update to Scala 2.12.7. See https://issues.apache.org/jira/browse/SPARK-25578 for why. ## How was this patch tested? Existing tests. Closes #22600 from srowen/SPARK-25578. Authored-by: Sean Owen <sean.owen@databricks.com> Signed-off-by: Sean Owen <sean.owen@databricks.com> (cherry picked from commit 5114db5781967c1e8046296905d97560187479fb) Signed-off-by: Sean Owen <sean.owen@databricks.com> | 02 October 2018, 02:35:26 UTC |
| 426c2bd | Aleksandr Koriagin | 01 October 2018, 09:18:45 UTC | [SPARK-23401][PYTHON][TESTS] Add more data types for PandasUDFTests ## What changes were proposed in this pull request? Add more data types for Pandas UDF Tests for PySpark SQL ## How was this patch tested? manual tests Closes #22568 from AlexanderKoryagin/new_types_for_pandas_udf_tests. Lead-authored-by: Aleksandr Koriagin <aleksandr_koriagin@epam.com> Co-authored-by: hyukjinkwon <gurwls223@apache.org> Co-authored-by: Alexander Koryagin <AlexanderKoryagin@users.noreply.github.com> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit 30f5d0f2ddfe56266ea81e4255f9b4f373dab237) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 01 October 2018, 09:19:00 UTC |
| 82990e5 | seancxmao | 01 October 2018, 05:49:14 UTC | [SPARK-25453][SQL][TEST][.FFFFFFFFF] OracleIntegrationSuite IllegalArgumentException: Timestamp format must be yyyy-mm-dd hh:mm:ss ## What changes were proposed in this pull request? This PR aims to fix the failed test of `OracleIntegrationSuite`. ## How was this patch tested? Existing integration tests. Closes #22461 from seancxmao/SPARK-25453. Authored-by: seancxmao <seancxmao@gmail.com> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit 21f0b73dbcd94f9eea8cbc06a024b0e899edaf4c) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 01 October 2018, 05:49:27 UTC |
| 7b1094b | Marco Gaido | 01 October 2018, 05:08:04 UTC | [SPARK-25505][SQL][FOLLOWUP] Fix for attributes cosmetically different in Pivot clause ## What changes were proposed in this pull request? #22519 introduced a bug when the attributes in the pivot clause are cosmetically different from the output ones (eg. different case). In particular, the problem is that the PR used a `Set[Attribute]` instead of an `AttributeSet`. ## How was this patch tested? added UT Closes #22582 from mgaido91/SPARK-25505_followup. Authored-by: Marco Gaido <marcogaido91@gmail.com> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit fb8f4c05657595e089b6812d97dbfee246fce06f) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 01 October 2018, 05:08:19 UTC |
| c886f05 | Prashant Sharma | 30 September 2018, 21:28:20 UTC | [SPARK-25543][K8S] Print debug message iff execIdsRemovedInThisRound is not empty. ## What changes were proposed in this pull request? Spurious logs like /sec. 2018-09-26 09:33:57 DEBUG ExecutorPodsLifecycleManager:58 - Removed executors with ids from Spark that were either found to be deleted or non-existent in the cluster. 2018-09-26 09:33:58 DEBUG ExecutorPodsLifecycleManager:58 - Removed executors with ids from Spark that were either found to be deleted or non-existent in the cluster. 2018-09-26 09:33:59 DEBUG ExecutorPodsLifecycleManager:58 - Removed executors with ids from Spark that were either found to be deleted or non-existent in the cluster. 2018-09-26 09:34:00 DEBUG ExecutorPodsLifecycleManager:58 - Removed executors with ids from Spark that were either found to be deleted or non-existent in the cluster. The fix is easy, first check if there are any removed executors, before producing the log message. ## How was this patch tested? Tested by manually deploying to a minikube cluster. Closes #22565 from ScrapCodes/spark-25543/k8s/debug-log-spurious-warning. Authored-by: Prashant Sharma <prashsh1@in.ibm.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit 4da541a5d23b039eb549dd849cf121bdc8676e59) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 30 September 2018, 21:28:39 UTC |
| 8e6fb47 | Darcy Shen | 30 September 2018, 14:00:23 UTC | [CORE][MINOR] Fix obvious error and compiling for Scala 2.12.7 ## What changes were proposed in this pull request? Fix an obvious error. ## How was this patch tested? Existing tests. Closes #22577 from sadhen/minor_fix. Authored-by: Darcy Shen <sadhen@zoho.com> Signed-off-by: Sean Owen <sean.owen@databricks.com> (cherry picked from commit 40e6ed89405828ff312eca0abd43cfba4b9185b2) Signed-off-by: Sean Owen <sean.owen@databricks.com> | 30 September 2018, 14:00:34 UTC |
| 6f510c6 | Shixiong Zhu | 30 September 2018, 01:10:04 UTC | [SPARK-25568][CORE] Continue to update the remaining accumulators when failing to update one accumulator ## What changes were proposed in this pull request? Since we don't fail a job when `AccumulatorV2.merge` fails, we should try to update the remaining accumulators so that they can still report correct values. ## How was this patch tested? The new unit test. Closes #22586 from zsxwing/SPARK-25568. Authored-by: Shixiong Zhu <zsxwing@gmail.com> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit b6b8a6632e2b6e5482aaf4bfa093700752a9df80) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 30 September 2018, 01:10:22 UTC |
| fef3027 | Felix Cheung | 29 September 2018, 21:48:32 UTC | [SPARK-25572][SPARKR] test only if not cran ## What changes were proposed in this pull request? CRAN doesn't seem to respect the system requirements as running tests - we have seen cases where SparkR is run on Java 10, which unfortunately Spark does not start on. For 2.4, lets attempt skipping all tests ## How was this patch tested? manual, jenkins, appveyor Author: Felix Cheung <felixcheung_m@hotmail.com> Closes #22589 from felixcheung/ralltests. (cherry picked from commit f4b138082ff91be74b0f5bbe19cdb90dd9e5f131) Signed-off-by: Felix Cheung <felixcheung@apache.org> | 29 September 2018, 21:48:51 UTC |
| a14306b | Liang-Chi Hsieh | 29 September 2018, 10:18:37 UTC | [SPARK-25262][DOC][FOLLOWUP] Fix link tags in html table ## What changes were proposed in this pull request? Markdown links are not working inside html table. We should use html link tag. ## How was this patch tested? Verified in IntelliJ IDEA's markdown editor and online markdown editor. Closes #22588 from viirya/SPARK-25262-followup. Authored-by: Liang-Chi Hsieh <viirya@gmail.com> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit dcb9a97f3e16d4645529ac619c3197fcba1c9806) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 29 September 2018, 10:18:52 UTC |
| ec2c17a | Dongjoon Hyun | 29 September 2018, 03:43:58 UTC | [SPARK-25570][SQL][TEST] Replace 2.3.1 with 2.3.2 in HiveExternalCatalogVersionsSuite ## What changes were proposed in this pull request? This PR aims to prevent test slowdowns at `HiveExternalCatalogVersionsSuite` by using the latest Apache Spark 2.3.2 link because the Apache mirrors will remove the old Spark 2.3.1 binaries eventually. `HiveExternalCatalogVersionsSuite` will not fail because [SPARK-24813](https://issues.apache.org/jira/browse/SPARK-24813) implements a fallback logic. However, it will cause many trials and fallbacks in all builds over `branch-2.3/branch-2.4/master`. We had better fix this issue. ## How was this patch tested? Pass the Jenkins with the updated version. Closes #22587 from dongjoon-hyun/SPARK-25570. Authored-by: Dongjoon Hyun <dongjoon@apache.org> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit 1e437835e96c4417117f44c29eba5ebc0112926f) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 29 September 2018, 03:44:12 UTC |
| 7614313 | Liang-Chi Hsieh | 28 September 2018, 21:29:56 UTC | [SPARK-25542][CORE][TEST] Move flaky test in OpenHashMapSuite to OpenHashSetSuite and make it against OpenHashSet ## What changes were proposed in this pull request? The specified test in OpenHashMapSuite to test large items is somehow flaky to throw OOM. By considering the original work #6763 that added this test, the test can be against OpenHashSetSuite. And by doing this should be to save memory because OpenHashMap allocates two more arrays when growing the map/set. ## How was this patch tested? Existing tests. Closes #22569 from viirya/SPARK-25542. Authored-by: Liang-Chi Hsieh <viirya@gmail.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit b7d80349b0e367d78cab238e62c2ec353f0f12b3) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 28 September 2018, 21:30:12 UTC |
| 81391c2 | Dongjoon Hyun | 28 September 2018, 21:10:24 UTC | [SPARK-23285][DOC][FOLLOWUP] Fix missing markup tag ## What changes were proposed in this pull request? This adds a missing markup tag. This should go to `master/branch-2.4`. ## How was this patch tested? Manual via `SKIP_API=1 jekyll build`. Closes #22585 from dongjoon-hyun/SPARK-23285. Authored-by: Dongjoon Hyun <dongjoon@apache.org> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit 0b33f08683a41f6f3a6ec02c327010c0722cc1d1) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 28 September 2018, 21:10:47 UTC |
| b2a1e2f | maryannxue | 28 September 2018, 07:09:06 UTC | [SPARK-25505][SQL] The output order of grouping columns in Pivot is different from the input order ## What changes were proposed in this pull request? The grouping columns from a Pivot query are inferred as "input columns - pivot columns - pivot aggregate columns", where input columns are the output of the child relation of Pivot. The grouping columns will be the leading columns in the pivot output and they should preserve the same order as specified by the input. For example, ``` SELECT * FROM ( SELECT course, earnings, "a" as a, "z" as z, "b" as b, "y" as y, "c" as c, "x" as x, "d" as d, "w" as w FROM courseSales ) PIVOT ( sum(earnings) FOR course IN ('dotNET', 'Java') ) ``` The output columns should be "a, z, b, y, c, x, d, w, ..." but now it is "a, b, c, d, w, x, y, z, ..." The fix is to use the child plan's `output` instead of `outputSet` so that the order can be preserved. ## How was this patch tested? Added UT. Closes #22519 from maryannxue/spark-25505. Authored-by: maryannxue <maryannxue@apache.org> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit e120a38c0cdfb569c9151bef4d53e98175da2b25) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 28 September 2018, 07:09:21 UTC |
| a43a082 | Shahid | 26 September 2018, 17:47:49 UTC | [SPARK-25533][CORE][WEBUI] AppSummary should hold the information about succeeded Jobs and completed stages only Currently, In the spark UI, when there are failed jobs or failed stages, display message for the completed jobs and completed stages are not consistent with the previous versions of spark. Reason is because, AppSummary holds the information about all the jobs and stages. But, In the below code, it checks against the completedJobs and completedStages. So, AppSummary should hold only successful jobs and stages. https://github.com/apache/spark/blob/66d29870c09e6050dd846336e596faaa8b0d14ad/core/src/main/scala/org/apache/spark/ui/jobs/AllJobsPage.scala#L306 https://github.com/apache/spark/blob/66d29870c09e6050dd846336e596faaa8b0d14ad/core/src/main/scala/org/apache/spark/ui/jobs/AllStagesPage.scala#L119 So, we should keep only completed jobs and stage information in the AppSummary, to make it consistent with Spark2.2 Test steps: bin/spark-shell ``` sc.parallelize(1 to 5, 5).collect() sc.parallelize(1 to 5, 2).map{ x => throw new RuntimeException("Fail")}.collect() ``` **Before fix:**   **After fix:**   Closes #22549 from shahidki31/SPARK-25533. Authored-by: Shahid <shahidki31@gmail.com> Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com> (cherry picked from commit 5ee21661834e837d414bc20591982a092c0aece3) Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com> | 27 September 2018, 17:24:14 UTC |
| 0256f8a | Marcelo Vanzin | 27 September 2018, 16:26:50 UTC | [SPARK-25546][CORE] Don't cache value of EVENT_LOG_CALLSITE_LONG_FORM. Caching the value of that config means different instances of SparkEnv will always use whatever was the first value to be read. It also breaks tests that use RDDInfo outside of the scope of a SparkContext. Since this is not a performance sensitive area, there's no advantage in caching the config value. Closes #22558 from vanzin/SPARK-25546. Authored-by: Marcelo Vanzin <vanzin@cloudera.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit 5fd22d05363dd8c0e1b10f3822ccb71eb42f6db9) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 27 September 2018, 16:27:05 UTC |

| 659ecb5 | Wenchen Fan | 27 September 2018, 14:31:03 UTC | Preparing development version 2.4.1-SNAPSHOT | 27 September 2018, 14:31:03 UTC |

| 42f25f3 | Wenchen Fan | 27 September 2018, 14:30:59 UTC | Preparing Spark release v2.4.0-rc2 | 27 September 2018, 14:30:59 UTC |

| 3c78ea2 | Dilip Biswal | 27 September 2018, 07:04:59 UTC | [SPARK-25522][SQL] Improve type promotion for input arguments of elementAt function ## What changes were proposed in this pull request? In ElementAt, when first argument is MapType, we should coerce the key type and the second argument based on findTightestCommonType. This is not happening currently. We may produce wrong output as we will incorrectly downcast the right hand side double expression to int. ```SQL spark-sql> select element_at(map(1,"one", 2, "two"), 2.2); two ``` Also, when the first argument is ArrayType, the second argument should be an integer type or a smaller integral type that can be safely casted to an integer type. Currently we may do an unsafe cast. In the following case, we should fail with an error as 2.2 is not a integer index. But instead we down cast it to int currently and return a result instead. ```SQL spark-sql> select element_at(array(1,2), 1.24D); 1 ``` This PR also supports implicit cast between two MapTypes. I have followed similar logic that exists today to do implicit casts between two array types. ## How was this patch tested? Added new tests in DataFrameFunctionSuite, TypeCoercionSuite. Closes #22544 from dilipbiswal/SPARK-25522. Authored-by: Dilip Biswal <dbiswal@us.ibm.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit d03e0af80d7659f12821cc2442efaeaee94d3985) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 27 September 2018, 11:50:01 UTC |
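This toy Python model shows why the map key type and the lookup argument should be coerced together instead of casting the argument down. Following the commit message, `2.2` is treated as a double. Types are plain strings, and `tightest_common_type` is a simplified, hypothetical stand-in for the analyzer's `findTightestCommonType`, not its real rules.

```python
# Hypothetical widening order; Spark's real type precedence is richer than this.
NUMERIC_ORDER = ["tinyint", "smallint", "int", "bigint", "float", "double"]


def tightest_common_type(t1, t2):
    """Return the wider of two numeric types, or None if they are incompatible."""
    if t1 == t2:
        return t1
    if t1 in NUMERIC_ORDER and t2 in NUMERIC_ORDER:
        return max(t1, t2, key=NUMERIC_ORDER.index)
    return None


def cast(value, to_type):
    return float(value) if to_type in ("float", "double") else int(value)


def element_at_downcast(m, key_type, key):
    # Pre-fix behaviour: the lookup key is cast to the map's key type (2.2 -> 2).
    return m.get(cast(key, key_type))


def element_at_promoted(m, key_type, key, key_arg_type):
    # Post-fix behaviour: map keys and lookup key are promoted to a common type.
    common = tightest_common_type(key_type, key_arg_type)
    if common is None:
        raise TypeError(f"cannot compare {key_type} keys with a {key_arg_type} argument")
    promoted = {cast(k, common): v for k, v in m.items()}
    return promoted.get(cast(key, common))


m = {1: "one", 2: "two"}
print(element_at_downcast(m, "int", 2.2))            # two
print(element_at_promoted(m, "int", 2.2, "double"))  # None
```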

| 53eb858 | Yuanjian Li | 27 September 2018, 07:13:18 UTC | [SPARK-25314][SQL] Fix Python UDF accessing attributes from both side of join in join conditions ## What changes were proposed in this pull request? Thanks for bahchis reporting this. It is more like a follow up work for #16581, this PR fix the scenario of Python UDF accessing attributes from both side of join in join condition. ## How was this patch tested? Add regression tests in PySpark and `BatchEvalPythonExecSuite`. Closes #22326 from xuanyuanking/SPARK-25314. Authored-by: Yuanjian Li <xyliyuanjian@gmail.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 2a8cbfddba2a59d144b32910c68c22d0199093fe) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 27 September 2018, 07:13:39 UTC |

| 0b4e581 | Wenchen Fan | 27 September 2018, 07:02:20 UTC | [SPARK-23715][SQL][DOC] improve document for from/to_utc_timestamp ## What changes were proposed in this pull request? We have an agreement that the behavior of `from/to_utc_timestamp` is corrected, although the function itself doesn't make much sense in Spark: https://issues.apache.org/jira/browse/SPARK-23715 This PR improves the document. ## How was this patch tested? N/A Closes #22543 from cloud-fan/doc. Authored-by: Wenchen Fan <wenchen@databricks.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit ff876137faba1802b66ecd483ba15f6ccd83ffc5) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 27 September 2018, 07:02:52 UTC |

| 0cf4c5b | 王小刚 | 27 September 2018, 05:02:05 UTC | [SPARK-25468][WEBUI] Highlight current page index in the spark UI ## What changes were proposed in this pull request? This PR is highlight current page index in the spark UI and history server UI, https://issues.apache.org/jira/browse/SPARK-25468 I have add the following code in webui.css ``` .paginate_button.active>a { color: #999999; text-decoration: underline; } ``` ## How was this patch tested? Manual tests for Chrome, Firefox and Safari Closes #22516 from Adamyuanyuan/spark-adam-25468. Lead-authored-by: 王小刚 <wangxiaogang@chinatelecom.cn> Co-authored-by: Adam Wang <Adamyuanyuan@users.noreply.github.com> Signed-off-by: Sean Owen <sean.owen@databricks.com> (cherry picked from commit 8b727994edd27104d49c6d690f93c6858fb9e1fc) Signed-off-by: Sean Owen <sean.owen@databricks.com> | 27 September 2018, 05:02:29 UTC |

| 01c000b | hyukjinkwon | 27 September 2018, 04:38:14 UTC | Revert "[SPARK-25540][SQL][PYSPARK] Make HiveContext in PySpark behave as the same as Scala." This reverts commit 7656358adc39eb8eb881368ab5a066fbf86149c8. | 27 September 2018, 04:38:14 UTC |

| f12769e | Shahid | 27 September 2018, 04:10:39 UTC | [SPARK-25536][CORE] metric value for METRIC_OUTPUT_RECORDS_WRITTEN is incorrect ## What changes were proposed in this pull request? changed metric value of METRIC_OUTPUT_RECORDS_WRITTEN from 'task.metrics.inputMetrics.recordsRead' to 'task.metrics.outputMetrics.recordsWritten'. This bug was introduced in SPARK-22190. https://github.com/apache/spark/pull/19426 ## How was this patch tested? Existing tests Closes #22555 from shahidki31/SPARK-25536. Authored-by: Shahid <shahidki31@gmail.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit 5def10e61e49dba85f4d8b39c92bda15137990a2) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 27 September 2018, 04:14:13 UTC |

| 7656358 | Takuya UESHIN | 27 September 2018, 01:51:20 UTC | [SPARK-25540][SQL][PYSPARK] Make HiveContext in PySpark behave as the same as Scala. ## What changes were proposed in this pull request? In Scala, `HiveContext` sets a config `spark.sql.catalogImplementation` of the given `SparkContext` and then passes to `SparkSession.builder`. The `HiveContext` in PySpark should behave as the same as Scala. ## How was this patch tested? Existing tests. Closes #22552 from ueshin/issues/SPARK-25540/hive_context. Authored-by: Takuya UESHIN <ueshin@databricks.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit c3c45cbd76d91d591d98cf8411fcfd30079f5969) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 27 September 2018, 01:51:42 UTC |

| 2ff91f2 | Wenchen Fan | 27 September 2018, 00:47:05 UTC | [SPARK-25454][SQL] add a new config for picking minimum precision for integral literals ## What changes were proposed in this pull request? https://github.com/apache/spark/pull/20023 proposed to allow precision loss during decimal operations, to reduce the possibilities of overflow. This is a behavior change and is protected by the DECIMAL_OPERATIONS_ALLOW_PREC_LOSS config. However, that PR introduced another behavior change: pick a minimum precision for integral literals, which is not protected by a config. This PR adds a new config for it: `spark.sql.literal.pickMinimumPrecision`. This can allow users to work around the issue in SPARK-25454, which is caused by a long-standing bug of negative scale. ## How was this patch tested? a new test Closes #22494 from cloud-fan/decimal. Authored-by: Wenchen Fan <wenchen@databricks.com> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit d0990e3dfee752a6460a6360e1a773138364d774) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 27 September 2018, 00:47:18 UTC |
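Here is a rough sketch of what the new flag switches between. It rests on an assumption: with `spark.sql.literal.pickMinimumPrecision` on, an integral literal gets a decimal type just wide enough for its digits. With the flag off, it gets the full width of its type (assumed here: 10 digits for int, 20 for long). The function name is hypothetical.

```python
def integral_literal_decimal_type(value: int, is_long: bool,
                                  pick_minimum_precision: bool = True):
    """Return an assumed (precision, scale) for an integral literal (hypothetical helper)."""
    if pick_minimum_precision:
        # Just enough digits to hold this particular literal.
        return (len(str(abs(value))), 0)
    # Otherwise the full range of the literal's type (assumed widths).
    return (20, 0) if is_long else (10, 0)


print(integral_literal_decimal_type(123, is_long=False))                                # (3, 0)
print(integral_literal_decimal_type(123, is_long=False, pick_minimum_precision=False))  # (10, 0)
```

In a live session the flag would be set like any other SQL conf, for example `spark.conf.set("spark.sql.literal.pickMinimumPrecision", "false")`.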

| 8d17200 | Reynold Xin | 26 September 2018, 17:15:16 UTC | [SPARK-24519][CORE] Compute SHUFFLE_MIN_NUM_PARTS_TO_HIGHLY_COMPRESS only once ## What changes were proposed in this pull request? Previously SPARK-24519 created a modifiable config SHUFFLE_MIN_NUM_PARTS_TO_HIGHLY_COMPRESS. However, the config is being parsed for every creation of MapStatus, which could be very expensive. Another problem with the previous approach is that it created the illusion that this can be changed dynamically at runtime, which was not true. This PR changes it so the config is computed only once. ## How was this patch tested? Removed a test case that's no longer valid. Closes #22521 from rxin/SPARK-24519. Authored-by: Reynold Xin <rxin@databricks.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit e702fb1d5218d062fcb8e618b92dad7958eb4062) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 26 September 2018, 17:22:50 UTC |
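A Python sketch of the "compute once" idea, using a dict as a stand-in for SparkConf. The key string `spark.shuffle.minNumPartitionsToHighlyCompress` and its default of 2000 are assumptions. `functools.lru_cache` parses the value on first use, so the per-map-output calls stay cheap. It also makes the second point of the commit explicit: changing the config afterwards has no effect.

```python
import functools

# Hypothetical stand-in for SparkConf; key name and default are assumptions.
CONF = {"spark.shuffle.minNumPartitionsToHighlyCompress": "2000"}
parse_count = 0


@functools.lru_cache(maxsize=None)
def min_num_parts_to_highly_compress() -> int:
    # Parsed on first use only; later calls return the cached int.
    global parse_count
    parse_count += 1
    return int(CONF["spark.shuffle.minNumPartitionsToHighlyCompress"])


def map_status_kind(num_partitions: int) -> str:
    # Called once per map output, so it must not re-parse the config each time.
    if num_partitions > min_num_parts_to_highly_compress():
        return "HighlyCompressedMapStatus"
    return "CompressedMapStatus"


kinds = [map_status_kind(n) for n in (10, 5000, 10, 5000)]
print(parse_count)  # 1

# A later change is not picked up: the cached value wins.
CONF["spark.shuffle.minNumPartitionsToHighlyCompress"] = "1"
print(map_status_kind(10))  # CompressedMapStatus
```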

| dc60476 | Reza Safi | 26 September 2018, 16:29:58 UTC | [SPARK-25318] Add exception handling when wrapping the input stream during the the fetch or stage retry in response to a corrupted block SPARK-4105 provided a solution to block corruption issue by retrying the fetch or the stage. In that solution there is a step that wraps the input stream with compression and/or encryption. This step is prone to exceptions, but in the current code there is no exception handling for this step and this has caused confusion for the user. The confusion was that after SPARK-4105 the user expects to see either a fetchFailed exception or a warning about a corrupted block. However an exception during wrapping can fail the job without any of those. This change adds exception handling for the wrapping step and also adds a fetch retry if we experience a corruption during the wrapping step. The reason for adding the retry is that usually user won't experience the same failure after rerunning the job and so it seems reasonable try to fetch and wrap one more time instead of failing. Closes #22325 from rezasafi/localcorruption. Authored-by: Reza Safi <rezasafi@cloudera.com> Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com> (cherry picked from commit bd2ae857d1c5f251056de38a7a40540986756b94) Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com> | 26 September 2018, 16:30:13 UTC |
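The retry-once-then-fail policy described above can be sketched as follows. `fetch`, `wrap` and `FetchFailedError` are hypothetical stand-ins for the shuffle fetcher, the compression/encryption wrapper and Spark's fetch-failure exception. Corruption is modelled as an `OSError` raised while wrapping.

```python
class FetchFailedError(Exception):
    """Hypothetical stand-in for Spark's fetch-failure exception."""


def fetch_and_wrap(block_id, fetch, wrap):
    """Fetch a block and wrap it, re-fetching once if wrapping hits corruption.

    fetch(block_id) returns the raw stream; wrap(raw) applies decompression
    and/or decryption and raises OSError on corrupt input (both hypothetical).
    """
    raw = fetch(block_id)
    try:
        return wrap(raw)
    except OSError:
        # First failure: often not reproducible, so fetch the block once more.
        pass
    raw = fetch(block_id)
    try:
        return wrap(raw)
    except OSError as e:
        # Second failure: report a fetch failure instead of an opaque job error.
        raise FetchFailedError(f"block {block_id} is corrupt") from e
```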

| 9969827 | Rong Tang | 26 September 2018, 15:37:17 UTC | [SPARK-25509][CORE] Windows doesn't support POSIX permissions ## What changes were proposed in this pull request? SHS V2 cannot be enabled on Windows, because Windows doesn't support POSIX permissions. ## How was this patch tested? test case fails in windows without this fix. org.apache.spark.deploy.history.HistoryServerDiskManagerSuite test("leasing space") SHS V2 cannot run successfully in Windows without this fix. java.lang.UnsupportedOperationException: 'posix:permissions' not supported as initial attribute at sun.nio.fs.WindowsSecurityDescriptor.fromAttribute(WindowsSecurityDescriptor.java:358) Closes #22520 from jianjianjiao/FixWindowsPermssionsIssue. Authored-by: Rong Tang <rotang@microsoft.com> Signed-off-by: Sean Owen <sean.owen@databricks.com> (cherry picked from commit a2ac5a72ccd2b14c8492d4a6da9e8b30f0f3c9b4) Signed-off-by: Sean Owen <sean.owen@databricks.com> | 26 September 2018, 15:37:27 UTC |

| d44b863 | seancxmao | 26 September 2018, 14:14:14 UTC | [SPARK-20937][DOCS] Describe spark.sql.parquet.writeLegacyFormat property in Spark SQL, DataFrames and Datasets Guide ## What changes were proposed in this pull request? Describe spark.sql.parquet.writeLegacyFormat property in Spark SQL, DataFrames and Datasets Guide. ## How was this patch tested? N/A Closes #22453 from seancxmao/SPARK-20937. Authored-by: seancxmao <seancxmao@gmail.com> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit cf5c9c4b550c3a8ed59d7ef9404f2689ea763fa9) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 26 September 2018, 14:14:27 UTC |

| 3f20305 | gatorsmile | 26 September 2018, 01:32:51 UTC | [SPARK-24324][PYTHON][FOLLOW-UP] Rename the Conf to spark.sql.legacy.execution.pandas.groupedMap.assignColumnsByName ## What changes were proposed in this pull request? Add the legacy prefix for spark.sql.execution.pandas.groupedMap.assignColumnsByPosition and rename it to spark.sql.legacy.execution.pandas.groupedMap.assignColumnsByName ## How was this patch tested? The existing tests. Closes #22540 from gatorsmile/renameAssignColumnsByPosition. Lead-authored-by: gatorsmile <gatorsmile@gmail.com> Co-authored-by: Hyukjin Kwon <gurwls223@gmail.com> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit 8c2edf46d0f89e5ec54968218d89f30a3f8190bc) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 26 September 2018, 01:33:13 UTC |

| f91247f | Imran Rashid | 26 September 2018, 00:45:27 UTC | [SPARK-25422][CORE] Don't memory map blocks streamed to disk. After data has been streamed to disk, the buffers are inserted into the memory store in some cases (eg., with broadcast blocks). But broadcast code also disposes of those buffers when the data has been read, to ensure that we don't leave mapped buffers using up memory, which then leads to garbage data in the memory store. ## How was this patch tested? Ran the old failing test in a loop. Full tests on jenkins Closes #22546 from squito/SPARK-25422-master. Authored-by: Imran Rashid <irashid@cloudera.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 9bb3a0c67bd851b09ff4701ef1d280e2a77d791b) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 26 September 2018, 00:45:56 UTC |

| 544f86a | Shixiong Zhu | 25 September 2018, 18:42:27 UTC | [SPARK-25495][SS] FetchedData.reset should reset all fields ## What changes were proposed in this pull request? `FetchedData.reset` should reset `_nextOffsetInFetchedData` and `_offsetAfterPoll`. Otherwise it will cause inconsistent cached data and may make Kafka connector return wrong results. ## How was this patch tested? The new unit test. Closes #22507 from zsxwing/fix-kafka-reset. Lead-authored-by: Shixiong Zhu <zsxwing@gmail.com> Co-authored-by: Shixiong Zhu <shixiong@databricks.com> Signed-off-by: Shixiong Zhu <zsxwing@gmail.com> (cherry picked from commit 66d29870c09e6050dd846336e596faaa8b0d14ad) Signed-off-by: Shixiong Zhu <zsxwing@gmail.com> | 25 September 2018, 18:42:39 UTC |
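A small Python sketch of the invariant behind this fix: `reset` must clear every cached field at once. The field names follow the commit message. The `UNKNOWN_OFFSET` sentinel value is an assumption, and this is not the connector's Scala class.

```python
from dataclasses import dataclass, field

UNKNOWN_OFFSET = -2  # hypothetical sentinel meaning "no offset known"


@dataclass
class FetchedData:
    records: list = field(default_factory=list)
    next_offset_in_fetched_data: int = UNKNOWN_OFFSET
    offset_after_poll: int = UNKNOWN_OFFSET

    def reset(self) -> None:
        # Clear every cached field; resetting only the records would leave
        # stale offsets that no longer describe the (now empty) buffer.
        self.records = []
        self.next_offset_in_fetched_data = UNKNOWN_OFFSET
        self.offset_after_poll = UNKNOWN_OFFSET


data = FetchedData(records=["r1", "r2"], next_offset_in_fetched_data=5, offset_after_poll=7)
data.reset()
print(data)
```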

| a709718 | Reynold Xin | 25 September 2018, 12:13:07 UTC | [SPARK-23907][SQL] Revert regr_* functions entirely ## What changes were proposed in this pull request? This patch reverts entirely all the regr_* functions added in SPARK-23907. These were added by mgaido91 (and proposed by gatorsmile) to improve compatibility with other database systems, without any actual use cases. However, they are very rarely used, and in Spark there are much better ways to compute these functions, due to Spark's flexibility in exposing real programming APIs. I'm going through all the APIs added in Spark 2.4 and I think we should revert these. If there are strong enough demands and more use cases, we can add them back in the future pretty easily. ## How was this patch tested? Reverted test cases also. Closes #22541 from rxin/SPARK-23907. Authored-by: Reynold Xin <rxin@databricks.com> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit 9cbd001e2476cd06aa0bcfcc77a21a9077d5797a) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 25 September 2018, 12:13:22 UTC |
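For reference, the two most common of the reverted functions follow directly from population covariance and variance, which supports the commit's point that they are easy to compute by other means. This plain-Python sketch uses a hypothetical function name. Like SQL aggregates, it skips pairs containing a null, and it returns nulls when `var_pop(x)` is 0.

```python
from statistics import fmean


def regr_slope_intercept(ys, xs):
    """Least-squares slope and intercept of y on x (hypothetical helper)."""
    pairs = [(y, x) for y, x in zip(ys, xs) if y is not None and x is not None]
    if not pairs:
        return None, None
    mean_y = fmean(y for y, _ in pairs)
    mean_x = fmean(x for _, x in pairs)
    covar_pop = fmean((y - mean_y) * (x - mean_x) for y, x in pairs)
    var_pop_x = fmean((x - mean_x) ** 2 for _, x in pairs)
    if var_pop_x == 0:
        return None, None  # slope is undefined when x does not vary
    slope = covar_pop / var_pop_x
    return slope, mean_y - slope * mean_x


print(regr_slope_intercept([3, 5, 7, 9], [1, 2, 3, 4]))  # (2.0, 1.0)
```

In Spark itself the same values can be derived from the existing `covar_pop`, `var_pop` and `avg` aggregates.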

| 4ca4ef7 | Dilip Biswal | 25 September 2018, 04:05:04 UTC | [SPARK-25519][SQL] ArrayRemove function may return incorrect result when right expression is implicitly downcasted. ## What changes were proposed in this pull request? In ArrayRemove, we currently cast the right hand side expression to match the element type of the left hand side Array. This may result in down casting and may return wrong result or questionable result. Example : ```SQL spark-sql> select array_remove(array(1,2,3), 1.23D); [2,3] ``` ```SQL spark-sql> select array_remove(array(1,2,3), 'foo'); NULL ``` We should safely coerce both left and right hand side expressions. ## How was this patch tested? Added tests in DataFrameFunctionsSuite Closes #22542 from dilipbiswal/SPARK-25519. Authored-by: Dilip Biswal <dbiswal@us.ibm.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 7d8f5b62c57c9e2903edd305e8b9c5400652fdb0) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 25 September 2018, 04:05:37 UTC |
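This toy model contrasts the two behaviours in the examples. In the first, the argument is cast down to the array's `int` element type, so `1.23` removes `1`, and `'foo'` fails the cast and yields NULL. In the second, both sides are promoted to a common numeric type before comparing. Function names are hypothetical, and the string case after the fix is not modelled.

```python
def array_remove_downcast(arr, x):
    """Pre-fix behaviour (toy): cast x to int, or return NULL if the cast fails."""
    try:
        target = int(x)
    except ValueError:
        return None  # e.g. 'foo' cannot become an int, so the result is NULL
    return [e for e in arr if e != target]


def array_remove_promoted(arr, x):
    """Post-fix behaviour (toy): compare after promoting both sides to double."""
    return [e for e in arr if float(e) != float(x)]


print(array_remove_downcast([1, 2, 3], 1.23))  # [2, 3]
print(array_remove_promoted([1, 2, 3], 1.23))  # [1, 2, 3]
```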

| e4c03e8 | Shahid | 25 September 2018, 03:03:52 UTC | [SPARK-25503][CORE][WEBUI] Total task message in stage page is ambiguous ## What changes were proposed in this pull request? Test steps : 1) bin/spark-shell --conf spark.ui.retainedTasks=10 2) val rdd = sc.parallelize(1 to 1000, 1000) 3) rdd.count Stage page tab in the UI will display 10 tasks, but display message is wrong. It should reverse. ## How was this patch tested? Manually tested Closes #22525 from shahidki31/SparkUI. Authored-by: Shahid <shahidki31@gmail.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> (cherry picked from commit 615792da42b3ee3c5f623c869fada17a3aa92884) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 25 September 2018, 03:04:26 UTC |

| ffc081c | Shahid | 24 September 2018, 21:17:42 UTC | [SPARK-25502][CORE][WEBUI] Empty Page when page number exceeds the reatinedTask size. ## What changes were proposed in this pull request? Test steps : 1) bin/spark-shell --conf spark.ui.retainedTasks=200 ``` val rdd = sc.parallelize(1 to 1000, 1000) rdd.count ``` Stage tab in the UI will display 10 pages with 100 tasks per page. But number of retained tasks is only 200. So, from the 3rd page onwards will display nothing. We have to calculate total pages based on the number of tasks need display in the UI. ## How was this patch tested? Manually tested Closes #22526 from shahidki31/SPARK-25502. Authored-by: Shahid <shahidki31@gmail.com> Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com> (cherry picked from commit 3ce2e008ec1bf70adc5a4b356e09a469e94af803) Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com> | 24 September 2018, 21:18:03 UTC |
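The page-count fix comes down to paginating over the number of tasks that can actually be shown. Below is a sketch with a hypothetical helper, using the numbers from the test steps (1000 tasks, 200 retained, 100 per page).

```python
import math


def total_pages(total_tasks: int, retained_tasks: int, page_size: int) -> int:
    # Only retained tasks can be rendered, so paginate over the smaller count.
    shown = min(total_tasks, retained_tasks)
    return math.ceil(shown / page_size)


print(total_pages(1000, 200, 100))  # 2 (previously 10 pages, most of them empty)
```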

| ec38428 | hyukjinkwon | 24 September 2018, 15:49:19 UTC | [SPARK-25460][BRANCH-2.4][SS] DataSourceV2: SS sources do not respect SessionConfigSupport ## What changes were proposed in this pull request? This PR proposes to backport SPARK-25460 to branch-2.4: This PR proposes to respect `SessionConfigSupport` in SS datasources as well. Currently these are only respected in batch sources: https://github.com/apache/spark/blob/e06da95cd9423f55cdb154a2778b0bddf7be984c/sql/core/src/main/scala/org/apache/spark/sql/DataFrameReader.scala#L198-L203 https://github.com/apache/spark/blob/e06da95cd9423f55cdb154a2778b0bddf7be984c/sql/core/src/main/scala/org/apache/spark/sql/DataFrameWriter.scala#L244-L249 If a developer makes a datasource V2 that supports both structured streaming and batch jobs, batch jobs respect a specific configuration, let's say, URL to connect and fetch data (which end users might not be aware of); however, structured streaming ends up with not supporting this (and should explicitly be set into options). ## How was this patch tested? Unit tests were added. Closes #22529 from HyukjinKwon/SPARK-25460-backport. Authored-by: hyukjinkwon <gurwls223@apache.org> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 24 September 2018, 15:49:19 UTC |
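A sketch of how session configs could be mapped onto data source options. It assumes the `spark.datasource.<keyPrefix>.<option>` naming used with `SessionConfigSupport`, and assumes that explicitly passed options take precedence over session-level ones. The source name `mysource` and its URL are made up.

```python
import re


def extract_session_configs(key_prefix: str, session_conf: dict) -> dict:
    """Turn spark.datasource.<key_prefix>.<name> entries into source options (sketch)."""
    pattern = re.compile(rf"^spark\.datasource\.{re.escape(key_prefix)}\.(.+)")
    options = {}
    for key, value in session_conf.items():
        match = pattern.match(key)
        if match:
            options[match.group(1)] = value
    return options


session_conf = {
    "spark.datasource.mysource.url": "jdbc:example://host/db",  # hypothetical source
    "spark.sql.shuffle.partitions": "200",
}
user_options = {"table": "events"}
# Assumed precedence: explicit reader/writer options override session defaults.
options = {**extract_session_configs("mysource", session_conf), **user_options}
print(options)  # {'url': 'jdbc:example://host/db', 'table': 'events'}
```

The same helper would be applied on both the batch and the streaming read/write paths, which is the gap this backport closes.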

| 51d5378 | Dilip Biswal | 24 September 2018, 13:37:51 UTC | [SPARK-25416][SQL] ArrayPosition function may return incorrect result when right expression is implicitly down casted ## What changes were proposed in this pull request? In ArrayPosition, we currently cast the right hand side expression to match the element type of the left hand side Array. This may result in down casting and may return wrong result or questionable result. Example : ```SQL spark-sql> select array_position(array(1), 1.34); 1 ``` ```SQL spark-sql> select array_position(array(1), 'foo'); null ``` We should safely coerce both left and right hand side expressions. ## How was this patch tested? Added tests in DataFrameFunctionsSuite Closes #22407 from dilipbiswal/SPARK-25416. Authored-by: Dilip Biswal <dbiswal@us.ibm.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit bb49661e192eed78a8a306deffd83c73bd4a9eff) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 24 September 2018, 13:43:34 UTC |

| 13bc58d | Stan Zhai | 24 September 2018, 13:33:12 UTC | [SPARK-21318][SQL] Improve exception message thrown by `lookupFunction` ## What changes were proposed in this pull request? The function actually exists in current selected database, and it's failed to init during `lookupFunciton`, but the exception message is: ``` This function is neither a registered temporary function nor a permanent function registered in the database 'default'. ``` This is not conducive to positioning problems. This PR fix the problem. ## How was this patch tested? new test case + manual tests Closes #18544 from stanzhai/fix-udf-error-message. Authored-by: Stan Zhai <mail@stanzhai.site> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 804515f821086ea685815d3c8eff42d76b7d9e4e) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 24 September 2018, 13:33:38 UTC |

| 36e7c8f | hyukjinkwon | 24 September 2018, 11:25:02 UTC | [SPARKR] Match pyspark features in SparkR communication protocol | 24 September 2018, 11:28:31 UTC |

| c64e750 | gatorsmile | 23 September 2018, 02:16:33 UTC | [MINOR][PYSPARK] Always Close the tempFile in _serialize_to_jvm ## What changes were proposed in this pull request? Always close the tempFile after `serializer.dump_stream(data, tempFile)` in _serialize_to_jvm ## How was this patch tested? N/A Closes #22523 from gatorsmile/fixMinor. Authored-by: gatorsmile <gatorsmile@gmail.com> Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 23 September 2018, 02:18:00 UTC |
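The pattern this commit applies is plain `try`/`finally`. A sketch, assuming `dump_stream(data, file)` behaves like the serializer call named in the message (`pickle.dump` happens to fit that signature). The helper name is hypothetical.

```python
import os
import pickle
import tempfile


def serialize_to_temp_file(data, dump_stream):
    """Write data to a temp file and always close it, even if serialisation fails."""
    temp_file = tempfile.NamedTemporaryFile(delete=False)
    try:
        dump_stream(data, temp_file)
    finally:
        # Runs on success and on error, so the file handle never leaks.
        temp_file.close()
    return temp_file.name


path = serialize_to_temp_file([1, 2, 3], pickle.dump)
with open(path, "rb") as f:
    print(pickle.load(f))  # [1, 2, 3]
os.remove(path)
```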

| 1303eb5 | WeichenXu | 21 September 2018, 20:08:01 UTC | [SPARK-25321][ML] Fix local LDA model constructor ## What changes were proposed in this pull request? change back the constructor to: ``` class LocalLDAModel private[ml] ( uid: String, vocabSize: Int, private[clustering] val oldLocalModel : OldLocalLDAModel, sparkSession: SparkSession) ``` Although it is marked `private[ml]`, it is used in `mleap` and the master change breaks `mleap` building. See mleap code [here](https://github.com/combust/mleap/blob/c7860af328d519cf56441b4a7cd8e6ec9d9fee59/mleap-spark/src/main/scala/org/apache/spark/ml/bundle/ops/clustering/LDAModelOp.scala#L57) ## How was this patch tested? Manual. Closes #22510 from WeichenXu123/LDA_fix. Authored-by: WeichenXu <weichen.xu@databricks.com> Signed-off-by: Xiangrui Meng <meng@databricks.com> (cherry picked from commit 40edab209bdefe793b59b650099cea026c244484) Signed-off-by: Xiangrui Meng <meng@databricks.com> | 21 September 2018, 20:08:11 UTC |

| 138a631 | WeichenXu | 21 September 2018, 20:05:24 UTC | [SPARK-25321][ML] Revert SPARK-14681 to avoid API breaking change ## What changes were proposed in this pull request? Revert SPARK-14681 to avoid API breaking change. PR [SPARK-14681] will break mleap. ## How was this patch tested? N/A Closes #22492 from WeichenXu123/revert_tree_change. Authored-by: WeichenXu <weichen.xu@databricks.com> Signed-off-by: Xiangrui Meng <meng@databricks.com> | 21 September 2018, 20:05:24 UTC |

| ce66361 | Reynold Xin | 21 September 2018, 16:45:41 UTC | [SPARK-19724][SQL] allowCreatingManagedTableUsingNonemptyLocation should have legacy prefix One more legacy config to go ... Closes #22515 from rxin/allowCreatingManagedTableUsingNonemptyLocation. Authored-by: Reynold Xin <rxin@databricks.com> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit 4a11209539130c6a075119bf87c5ad854d42978e) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 21 September 2018, 16:46:03 UTC |

| 604828e | Marek Novotny | 21 September 2018, 09:16:54 UTC | [SPARK-25469][SQL] Eval methods of Concat, Reverse and ElementAt should use pattern matching only once ## What changes were proposed in this pull request? The PR proposes to avoid usage of pattern matching for each call of ```eval``` method within: - ```Concat``` - ```Reverse``` - ```ElementAt``` ## How was this patch tested? Run the existing tests for ```Concat```, ```Reverse``` and ```ElementAt``` expression classes. Closes #22471 from mn-mikke/SPARK-25470. Authored-by: Marek Novotny <mn.mikke@gmail.com> Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org> (cherry picked from commit 2c9d8f56c71093faf152ca7136c5fcc4a7b2a95f) Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org> | 21 September 2018, 09:30:32 UTC |
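A Python analogue of "pattern-match only once": the expression chooses its per-row function when it is constructed, so `eval` does no type dispatch. This toy `Reverse` handles only strings and arrays and is not the Catalyst class.

```python
class Reverse:
    """Toy expression that picks its per-row function once, at construction."""

    def __init__(self, data_type: str):
        if data_type == "string":
            self._reverse = lambda v: v[::-1]
        elif data_type == "array":
            self._reverse = lambda v: list(reversed(v))
        else:
            raise TypeError(f"reverse does not support {data_type}")

    def eval(self, value):
        # No per-call type matching: just a null check and the pre-chosen function.
        return None if value is None else self._reverse(value)


print(Reverse("string").eval("spark"))  # kraps
```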

| e425462 | Reynold Xin | 21 September 2018, 06:27:14 UTC | [SPARK-23549][SQL] Rename config spark.sql.legacy.compareDateTimestampInTimestamp ## What changes were proposed in this pull request? See title. Makes our legacy backward compatibility configs more consistent. ## How was this patch tested? Make sure all references have been updated: ``` > git grep compareDateTimestampInTimestamp docs/sql-programming-guide.md: - Since Spark 2.4, Spark compares a DATE type with a TIMESTAMP type after promotes both sides to TIMESTAMP. To set `false` to `spark.sql.legacy.compareDateTimestampInTimestamp` restores the previous behavior. This option will be removed in Spark 3.0. sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/TypeCoercion.scala: // if conf.compareDateTimestampInTimestamp is true sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/TypeCoercion.scala: => if (conf.compareDateTimestampInTimestamp) Some(TimestampType) else Some(StringType) sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/TypeCoercion.scala: => if (conf.compareDateTimestampInTimestamp) Some(TimestampType) else Some(StringType) sql/catalyst/src/main/scala/org/apache/spark/sql/internal/SQLConf.scala: buildConf("spark.sql.legacy.compareDateTimestampInTimestamp") sql/catalyst/src/main/scala/org/apache/spark/sql/internal/SQLConf.scala: def compareDateTimestampInTimestamp : Boolean = getConf(COMPARE_DATE_TIMESTAMP_IN_TIMESTAMP) sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/analysis/TypeCoercionSuite.scala: "spark.sql.legacy.compareDateTimestampInTimestamp" -> convertToTS.toString) { ``` Closes #22508 from rxin/SPARK-23549. Authored-by: Reynold Xin <rxin@databricks.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 411ecc365ea62aef7a29d8764e783e6a58dbb1d5) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 21 September 2018, 06:28:09 UTC |
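The grep output shows the rule: with the flag on, a DATE/TIMESTAMP comparison is done as TIMESTAMP, otherwise as STRING. This toy Python illustration shows why the two modes can disagree. The string formats used for the legacy path are an assumption.

```python
from datetime import date, datetime


def date_equals_timestamp(d: date, ts: datetime, compare_in_timestamp: bool = True) -> bool:
    if compare_in_timestamp:
        # Flag on: promote the DATE to a TIMESTAMP at midnight.
        return datetime(d.year, d.month, d.day) == ts
    # Flag off (legacy): both sides compared as strings (assumed formats).
    return d.isoformat() == ts.strftime("%Y-%m-%d %H:%M:%S")


d, ts = date(2018, 9, 21), datetime(2018, 9, 21)
print(date_equals_timestamp(d, ts))         # True
print(date_equals_timestamp(d, ts, False))  # False: '2018-09-21' != '2018-09-21 00:00:00'
```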

| aff6aed | Reynold Xin | 21 September 2018, 06:17:34 UTC | [SPARK-25384][SQL] Clarify fromJsonForceNullableSchema will be removed in Spark 3.0 See above. This should go into the 2.4 release. Closes #22509 from rxin/SPARK-25384. Authored-by: Reynold Xin <rxin@databricks.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit fb3276a54a2b7339e5e0fb62fb01cbefcc330c8b) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 21 September 2018, 06:18:00 UTC |

| 5d74449 | gatorsmile | 21 September 2018, 02:39:45 UTC | Revert "[SPARK-23715][SQL] the input of to/from_utc_timestamp can not have timezone ## What changes were proposed in this pull request? This reverts commit 417ad92502e714da71552f64d0e1257d2fd5d3d0. We decided to keep the current behaviors unchanged and will consider whether we will deprecate the these functions in 3.0. For more details, see the discussion in https://issues.apache.org/jira/browse/SPARK-23715 ## How was this patch tested? The existing tests. Closes #22505 from gatorsmile/revertSpark-23715. Authored-by: gatorsmile <gatorsmile@gmail.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 5d25e154408f71d24c4829165a16014fdacdd209) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 21 September 2018, 02:40:13 UTC |

| 51f3659 | Gengliang Wang | 21 September 2018, 00:41:24 UTC | [SPARK-24777][SQL] Add write benchmark for AVRO ## What changes were proposed in this pull request? Refactor `DataSourceWriteBenchmark` and add write benchmark for AVRO. ## How was this patch tested? Build and run the benchmark. Closes #22451 from gengliangwang/avroWriteBenchmark. Authored-by: Gengliang Wang <gengliang.wang@databricks.com> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit 950ab79957fc0cdc2dafac94765787e87ece9e74) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 21 September 2018, 00:41:37 UTC |

| 43c62e7 | Nihar Sheth | 20 September 2018, 18:52:20 UTC | [SPARK-24918][CORE] Executor Plugin API ## What changes were proposed in this pull request? A continuation of squito's executor plugin task. By his request I took his code and added testing and moved the plugin initialization to a separate thread. Executor plugins now run on one separate thread, so the executor does not wait on them. Added testing. ## How was this patch tested? Added test cases that test using a sample plugin. Closes #22192 from NiharS/executorPlugin. Lead-authored-by: Nihar Sheth <niharrsheth@gmail.com> Co-authored-by: NiharS <niharrsheth@gmail.com> Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com> (cherry picked from commit 2f51e72356babac703cc20a531b4dcc7712f34af) Signed-off-by: Marcelo Vanzin <vanzin@cloudera.com> | 20 September 2018, 18:52:31 UTC |
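A sketch of "initialise plugins on a separate thread" in Python. Here `ExecutorPlugin` is a hypothetical stand-in with `init`/`shutdown` hooks, and the thread name is made up. The caller gets control back immediately while the plugins initialise in the background.

```python
import threading


class ExecutorPlugin:
    """Hypothetical Python stand-in for the plugin interface."""

    def init(self) -> None:
        pass

    def shutdown(self) -> None:
        pass


def start_plugins(plugins):
    """Initialise all plugins on one background thread and return it immediately."""
    def run():
        for plugin in plugins:
            plugin.init()

    thread = threading.Thread(target=run, name="executor-plugin-init", daemon=True)
    thread.start()
    return thread


class LoggingPlugin(ExecutorPlugin):
    def init(self) -> None:
        print("plugin initialised")


start_plugins([LoggingPlugin()]).join()
```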

| c67c597 | maryannxue | 20 September 2018, 17:00:28 UTC | [SPARK-25450][SQL] PushProjectThroughUnion rule uses the same exprId for project expressions in each Union child, causing mistakes in constant propagation ## What changes were proposed in this pull request? The problem was cause by the PushProjectThroughUnion rule, which, when creating new Project for each child of Union, uses the same exprId for expressions of the same position. This is wrong because, for each child of Union, the expressions are all independent, and it can lead to a wrong result if other rules like FoldablePropagation kicks in, taking two different expressions as the same. This fix is to create new expressions in the new Project for each child of Union. ## How was this patch tested? Added UT. Closes #22447 from maryannxue/push-project-thru-union-bug. Authored-by: maryannxue <maryannxue@apache.org> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit 88446b6ad19371f15d06ef67052f6c1a8072c04a) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 20 September 2018, 17:00:42 UTC |

| fc03672 | hyukjinkwon | 20 September 2018, 16:41:42 UTC | [MINOR][PYTHON] Use a helper in `PythonUtils` instead of direct accessing Scala package ## What changes were proposed in this pull request? This PR proposes to use add a helper in `PythonUtils` instead of direct accessing Scala package. ## How was this patch tested? Jenkins tests. Closes #22483 from HyukjinKwon/minor-refactoring. Authored-by: hyukjinkwon <gurwls223@apache.org> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit 88e7e87bd5c052e10f52d4bb97a9d78f5b524128) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 20 September 2018, 16:42:17 UTC |

| 78dd1d8 | Dilip Biswal | 20 September 2018, 12:33:44 UTC | [SPARK-25417][SQL] ArrayContains function may return incorrect result when right expression is implicitly down casted ## What changes were proposed in this pull request? In ArrayContains, we currently cast the right hand side expression to match the element type of the left hand side Array. This may result in down casting and may return wrong result or questionable result. Example : ```SQL spark-sql> select array_contains(array(1), 1.34); true ``` ```SQL spark-sql> select array_contains(array(1), 'foo'); null ``` We should safely coerce both left and right hand side expressions. ## How was this patch tested? Added tests in DataFrameFunctionsSuite Closes #22408 from dilipbiswal/SPARK-25417. Authored-by: Dilip Biswal <dbiswal@us.ibm.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 67f2c6a55425d0f38e26caaf7e0b665d978d0a68) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 20 September 2018, 12:34:04 UTC |

| b3bdfd7 | Liang-Chi Hsieh | 20 September 2018, 12:18:31 UTC | Revert [SPARK-19355][SPARK-25352] ## What changes were proposed in this pull request? This goes to revert sequential PRs based on some discussion and comments at https://github.com/apache/spark/pull/16677#issuecomment-422650759. #22344 #22330 #22239 #16677 ## How was this patch tested? Existing tests. Closes #22481 from viirya/revert-SPARK-19355-1. Authored-by: Liang-Chi Hsieh <viirya@gmail.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 89671a27e783d77d4bfaec3d422cc8dd468ef04c) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 20 September 2018, 12:18:58 UTC |

| e07042a | hyukjinkwon | 20 September 2018, 07:03:16 UTC | [MINOR][PYTHON][TEST] Use collect() instead of show() to make the output silent ## What changes were proposed in this pull request? This PR replace an effective `show()` to `collect()` to make the output silent. **Before:** ``` test_simple_udt_in_df (pyspark.sql.tests.SQLTests) ... +---+----------+ |key| val| +---+----------+ | 0|[0.0, 0.0]| | 1|[1.0, 1.0]| | 2|[2.0, 2.0]| | 0|[3.0, 3.0]| | 1|[4.0, 4.0]| | 2|[5.0, 5.0]| | 0|[6.0, 6.0]| | 1|[7.0, 7.0]| | 2|[8.0, 8.0]| | 0|[9.0, 9.0]| +---+----------+ ``` **After:** ``` test_simple_udt_in_df (pyspark.sql.tests.SQLTests) ... ok ``` ## How was this patch tested? Manually tested. Closes #22479 from HyukjinKwon/minor-udf-test. Authored-by: hyukjinkwon <gurwls223@apache.org> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit 7ff5386ed934190344b2cda1069bde4bc68a3e63) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 20 September 2018, 07:03:34 UTC |

| dfcff38 | Reynold Xin | 20 September 2018, 04:23:35 UTC | [SPARK-4502][SQL] Rename to spark.sql.optimizer.nestedSchemaPruning.enabled ## What changes were proposed in this pull request? This patch adds an "optimizer" prefix to nested schema pruning. ## How was this patch tested? Should be covered by existing tests. Closes #22475 from rxin/SPARK-4502. Authored-by: Reynold Xin <rxin@databricks.com> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit 76399d75e23f2c7d6c2a1fb77a4387c5e15c809b) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 20 September 2018, 04:23:49 UTC |

| 06efed2 | Marco Gaido | 20 September 2018, 02:10:20 UTC | [SPARK-24341][FOLLOWUP][DOCS] Add migration note for IN subqueries behavior ## What changes were proposed in this pull request? The PR updates the migration guide in order to explain the changes introduced in the behavior of the IN operator with subqueries, in particular, the improved handling of struct attributes in these situations. ## How was this patch tested? NA Closes #22469 from mgaido91/SPARK-24341_followup. Authored-by: Marco Gaido <marcogaido91@gmail.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 8aae49afc7997aa1da61029409ef6d8ce0ab256a) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 20 September 2018, 02:10:36 UTC |

| 535bf1c | Reynold Xin | 20 September 2018, 01:51:20 UTC | [SPARK-24157][SS][FOLLOWUP] Rename to spark.sql.streaming.noDataMicroBatches.enabled ## What changes were proposed in this pull request? This patch changes the config option `spark.sql.streaming.noDataMicroBatchesEnabled` to `spark.sql.streaming.noDataMicroBatches.enabled` to be more consistent with the rest of the configs. Unfortunately, there is one streaming config called `spark.sql.streaming.metricsEnabled`. For that one we should just use a fallback config and change it in a separate patch. ## How was this patch tested? Made sure no other references to this config are in the code base: ``` > git grep "noDataMicro" sql/catalyst/src/main/scala/org/apache/spark/sql/internal/SQLConf.scala: buildConf("spark.sql.streaming.noDataMicroBatches.enabled") ``` Closes #22476 from rxin/SPARK-24157. Authored-by: Reynold Xin <rxin@databricks.com> Signed-off-by: Reynold Xin <rxin@databricks.com> (cherry picked from commit 936c920347e196381b48bc3656ca81a06f2ff46d) Signed-off-by: Reynold Xin <rxin@databricks.com> | 20 September 2018, 01:51:31 UTC |

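The SPARK-24157 follow-up suggests handling the legacy `spark.sql.streaming.metricsEnabled` name with a fallback config. The sketch below models that idea under the assumption of a simple string-keyed configuration map. An entry reads its own key first, then the legacy key, then a default. The `ConfEntry` class and the new key `spark.sql.streaming.metrics.enabled` are hypothetical illustrations. They are not Spark's internal config API or an actual Spark config name.

```python
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class ConfEntry:
    """Hypothetical config entry with an optional legacy fallback key."""

    key: str
    default: str
    fallback_key: Optional[str] = None  # legacy key consulted if `key` is unset

    def read(self, conf: Mapping[str, str]) -> str:
        # 1. The new, preferred key wins if it is set.
        if self.key in conf:
            return conf[self.key]
        # 2. Otherwise honor the legacy key so existing jobs keep working.
        if self.fallback_key is not None and self.fallback_key in conf:
            return conf[self.fallback_key]
        # 3. Neither key is set: use the default.
        return self.default


# Hypothetical renamed entry; the new key name is illustrative only.
METRICS_ENABLED = ConfEntry(
    key="spark.sql.streaming.metrics.enabled",
    default="false",
    fallback_key="spark.sql.streaming.metricsEnabled",
)
```

With this design, a job that still sets only the old key keeps its behavior. If both keys are set, the new key takes precedence.
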
| 99ae693 | Bryan Cutler | 20 September 2018, 01:29:29 UTC | [SPARK-25471][PYTHON][TEST] Fix pyspark-sql test error when using Python 3.6 and Pandas 0.23 ## What changes were proposed in this pull request? Fix the test that constructs a Pandas DataFrame by explicitly specifying the column order. Previously, this test assumed the columns would be sorted alphabetically; however, when using Python 3.6 with Pandas 0.23 or higher, the original column order is maintained. This causes the columns to get mixed up and the test to error out. Manually tested with `python/run-tests` using Python 3.6.6 and Pandas 0.23.4 Closes #22477 from BryanCutler/pyspark-tests-py36-pd23-SPARK-25471. Authored-by: Bryan Cutler <cutlerb@gmail.com> Signed-off-by: hyukjinkwon <gurwls223@apache.org> (cherry picked from commit 90e3955f384ca07bdf24faa6cdb60ded944cf0d8) Signed-off-by: hyukjinkwon <gurwls223@apache.org> | 20 September 2018, 01:29:49 UTC |

| a9a8d3a | Maxim Gekk | 19 September 2018, 23:53:26 UTC | [SPARK-25425][SQL][BACKPORT-2.4] Extra options should override session options in DataSource V2 ## What changes were proposed in this pull request? In this PR, I propose that extra options override session options in DataSource V2. Extra options are more specific, being set via `.option()`, and should override the more generic session options. ## How was this patch tested? Added tests for read and write paths. Closes #22474 from MaxGekk/session-options-2.4. Authored-by: Maxim Gekk <maxim.gekk@databricks.com> Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 19 September 2018, 23:53:26 UTC |

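SPARK-25425 makes options set via `.option()` take precedence over session-level options. The sketch below models that precedence with plain dictionaries. As a simplification, it assumes a source's session options are the session configs under a `spark.datasource.<keyPrefix>.` namespace, with that prefix stripped. The `mysource` prefix and the helper names are hypothetical. Real Spark option maps are case-insensitive, which this sketch ignores.

```python
from typing import Dict, Mapping


def extract_session_options(
    session_conf: Mapping[str, str], key_prefix: str
) -> Dict[str, str]:
    """Collect session configs for one source, stripping the namespace prefix."""
    prefix = f"spark.datasource.{key_prefix}."
    return {
        k[len(prefix):]: v for k, v in session_conf.items() if k.startswith(prefix)
    }


def resolve_options(
    session_conf: Mapping[str, str],
    key_prefix: str,
    extra_options: Mapping[str, str],
) -> Dict[str, str]:
    """Merge options so the more specific extra options win on collisions."""
    merged = extract_session_options(session_conf, key_prefix)
    merged.update(extra_options)  # applied last, so `.option()` values override
    return merged


# Illustrative usage with a hypothetical source prefix "mysource".
session = {
    "spark.datasource.mysource.path": "/from-session",
    "spark.datasource.mysource.mode": "fast",
    "spark.sql.shuffle.partitions": "10",  # unrelated config, not picked up
}
opts = resolve_options(session, "mysource", {"path": "/from-option"})
```

Here `opts` keeps `mode` from the session. The explicit `path` replaces the session value, which is the ordering the commit argues for.
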
| 9031c78 | Ilan Filonenko | 19 September 2018, 22:37:56 UTC | [SPARK-25021][K8S][BACKPORT] Add spark.executor.pyspark.memory limit for K8S ## What changes were proposed in this pull request? Add spark.executor.pyspark.memory limit for K8S [BACKPORT] ## How was this patch tested? Unit and Integration tests Closes #22376 from ifilonenko/SPARK-25021-2.4. Authored-by: Ilan Filonenko <if56@cornell.edu> Signed-off-by: Holden Karau <holden@pigscanfly.ca> | 19 September 2018, 22:37:56 UTC |

| 83a75a8 | WeichenXu | 19 September 2018, 22:16:20 UTC | [SPARK-22666][ML][FOLLOW-UP] Improve testcase to tolerate different schema representation ## What changes were proposed in this pull request? Improve the test case "image datasource test: read non image" to tolerate different schema representations. Because file:/path and file:///path are both valid URI forms, the test case can fail in some environments. ## How was this patch tested? Manual. Closes #22449 from WeichenXu123/image_url. Authored-by: WeichenXu <weichen.xu@databricks.com> Signed-off-by: Xiangrui Meng <meng@databricks.com> (cherry picked from commit 6f681d42964884d19bf22deb614550d712223117) Signed-off-by: Xiangrui Meng <meng@databricks.com> | 19 September 2018, 22:16:30 UTC |

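The image data source test failed because the same local file can be written as `file:/path` or `file:///path`. One way to make such a comparison tolerant is to compare the parsed scheme, authority and path instead of the raw strings. The sketch below does this with Python's standard `urllib.parse`, not the Scala test code the PR actually changed. The helper name `same_file_uri` is hypothetical.

```python
from urllib.parse import urlparse


def same_file_uri(a: str, b: str) -> bool:
    """Compare two URIs by parsed components rather than raw spelling.

    "file:/p" and "file:///p" both parse to scheme "file", an empty
    authority (netloc) and path "/p", so they compare equal here.
    """
    pa, pb = urlparse(a), urlparse(b)
    return (pa.scheme, pa.netloc, pa.path) == (pb.scheme, pb.netloc, pb.path)
```

With this helper, `same_file_uri("file:/tmp/img.png", "file:///tmp/img.png")` returns `True`. Different paths, or a URI with a non-empty host such as `file://host/tmp/img.png`, still compare unequal.
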
| 9fefb47 | Dongjoon Hyun | 19 September 2018, 21:33:40 UTC | Revert "[SPARK-23173][SQL] rename spark.sql.fromJsonForceNullableSchema" This reverts commit 6c7db7fd1ced1d143b1389d09990a620fc16be46. (cherry picked from commit cb1b55cf771018f1560f6b173cdd7c6ca8061bc7) Signed-off-by: Dongjoon Hyun <dongjoon@apache.org> | 19 September 2018, 21:38:21 UTC |

| 538ae62 | Gengliang Wang | 19 September 2018, 10:30:46 UTC | [SPARK-25445][BUILD][FOLLOWUP] Resolve issues in release-build.sh for publishing scala-2.12 build ## What changes were proposed in this pull request? This is a follow up for #22441. 1. Remove the "-Pkafka-0-8" flag for the Scala 2.12 build. 2. Clean up the script with simpler logic. 3. Switch the Scala version back to 2.11 before the script exits. ## How was this patch tested? Manual test. Closes #22454 from gengliangwang/revise_release_build. Authored-by: Gengliang Wang <gengliang.wang@databricks.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 5534a3a58e4025624fbad527dd129acb8025f25a) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 19 September 2018, 10:31:09 UTC |

| f11f445 | Reynold Xin | 19 September 2018, 05:41:27 UTC | [SPARK-24626] Add statistics prefix to parallelFileListingInStatsComputation ## What changes were proposed in this pull request? Rename the config to be more consistent with other statistics-based configs. ## How was this patch tested? N/A - straightforward rename of config option. Used `git grep` to make sure there are no remaining mentions of the old name. Closes #22457 from rxin/SPARK-24626. Authored-by: Reynold Xin <rxin@databricks.com> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit 4193c7623b92765adaee539e723328ddc9048c09) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 19 September 2018, 05:41:39 UTC |

| 00ede12 | Reynold Xin | 19 September 2018, 05:39:29 UTC | [SPARK-23173][SQL] rename spark.sql.fromJsonForceNullableSchema ## What changes were proposed in this pull request? `spark.sql.fromJsonForceNullableSchema` -> `spark.sql.function.fromJson.forceNullable` ## How was this patch tested? Made sure there are no more references to `spark.sql.fromJsonForceNullableSchema`. Closes #22459 from rxin/SPARK-23173. Authored-by: Reynold Xin <rxin@databricks.com> Signed-off-by: gatorsmile <gatorsmile@gmail.com> (cherry picked from commit 6c7db7fd1ced1d143b1389d09990a620fc16be46) Signed-off-by: gatorsmile <gatorsmile@gmail.com> | 19 September 2018, 05:39:43 UTC |

| ba8560a | Santiago Saavedra | 19 September 2018, 05:08:50 UTC | [SPARK-23200] Reset Kubernetes-specific config on Checkpoint restore Several configuration parameters related to Kubernetes need to be reset because they change with each invocation of spark-submit, which prevents recovery of Spark Streaming tasks. ## What changes were proposed in this pull request? When using the Kubernetes cluster-manager and spawning a Streaming workload, it is important to reset many spark.kubernetes.* properties that are generated by spark-submit but would be overwritten when restoring a Checkpoint. This is because the spark-submit codepath creates Kubernetes resources, such as a ConfigMap, a Secret and other variables, which have autogenerated names, so the previous names no longer resolve. In short, this change enables checkpoint restoration for streaming workloads, and thus enables Spark Streaming workloads in Kubernetes, which could not previously be restored from a checkpoint if the workload went down. ## How was this patch tested? This patch would benefit from testing in different k8s clusters. This is similar to the YARN-related code for resetting a Spark Streaming workload, but for the Kubernetes scheduler. This PR removes the initcontainers properties that existed before because they have since been removed in master. For a previous discussion, see the non-rebased work at: apache-spark-on-k8s#516 Closes #22392 from ssaavedra/fix-checkpointing-master. Authored-by: Santiago Saavedra <santiagosaavedra@gmail.com> Signed-off-by: Yinan Li <ynli@google.com> (cherry picked from commit 497f00f62b3ddd1f40507fdfe10f30cd9effb6cf) Signed-off-by: Yinan Li <ynli@google.com> | 19 September 2018, 05:09:10 UTC |

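SPARK-23200 resets Kubernetes properties that spark-submit regenerates on every invocation, so that a restored streaming checkpoint picks up the new values rather than stale ones. The sketch below models that reload step under the assumption that configurations are plain string maps. It starts from the checkpointed configuration and overwrites each key in a reload list with the value from the current submission. The function name is hypothetical. The single key in the usage example is illustrative and is not the full list Spark maintains.

```python
from typing import Dict, Iterable, Mapping


def restore_conf(
    checkpointed: Mapping[str, str],
    current: Mapping[str, str],
    keys_to_reload: Iterable[str],
) -> Dict[str, str]:
    """Rebuild a config from a checkpoint, refreshing regenerated keys.

    Hypothetical helper: every key in `keys_to_reload` that is present in
    the current submission replaces the stale checkpointed value. Keys
    absent from `current` keep their checkpointed value in this sketch.
    """
    restored = dict(checkpointed)
    for key in keys_to_reload:
        if key in current:
            restored[key] = current[key]
    return restored


# Illustrative usage: the driver pod name changes between submissions.
checkpointed_conf = {
    "spark.app.name": "stream",
    "spark.kubernetes.driver.pod.name": "old-driver",
}
current_conf = {
    "spark.app.name": "ignored",
    "spark.kubernetes.driver.pod.name": "new-driver",
}
restored = restore_conf(
    checkpointed_conf, current_conf, ["spark.kubernetes.driver.pod.name"]
)
```

In this sketch, only the listed key is refreshed, and every other setting, including `spark.app.name`, comes from the checkpoint. Whether stale keys missing from the new submission should instead be dropped is a design choice this sketch does not model.
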
| 76514a0 | Imran Rashid | 18 September 2018, 21:33:37 UTC | [SPARK-25456][SQL][TEST] Fix PythonForeachWriterSuite PythonForeachWriterSuite was failing because RowQueue now needs to have a handle on a SparkEnv with a SerializerManager, so added a mock env with a serializer manager. Also fixed a typo in the `finally` that was hiding the real exception. Tested PythonForeachWriterSuite locally, full tests via jenkins. Closes #22452 from squito/SPARK-25456. Authored-by: Imran Rashid <irashid@cloudera.com> Signed-off-by: Imran Rashid <irashid@cloudera.com> (cherry picked from commit a6f37b0742d87d5c8ee3e134999d665e5719e822) Signed-off-by: Imran Rashid <irashid@cloudera.com> | 18 September 2018, 21:33:49 UTC |

| 67f2cb6 | Ilan Filonenko | 18 September 2018, 18:43:35 UTC | [SPARK-25291][K8S] Fixing Flakiness of Executor Pod tests ## What changes were proposed in this pull request? Fixed flakiness in the PySpark tests caused by executors not being tested. Also includes an important fix to executorConf, which was failing tests when executors *were* tested. ## How was this patch tested? Unit and Integration tests Closes #22415 from ifilonenko/SPARK-25291. Authored-by: Ilan Filonenko <if56@cornell.edu> Signed-off-by: Yinan Li <ynli@google.com> (cherry picked from commit 123f0041d534f28e14343aafb4e5cec19dde14ad) Signed-off-by: Yinan Li <ynli@google.com> | 18 September 2018, 18:44:06 UTC |

| 8a2992e | Yuming Wang | 18 September 2018, 15:38:55 UTC | [SPARK-19550][DOC][FOLLOW-UP] Update tuning.md to use JDK8 ## What changes were proposed in this pull request? Update `tuning.md` and `rdd-programming-guide.md` to use JDK8. ## How was this patch tested? manual tests Closes #22446 from wangyum/java8. Authored-by: Yuming Wang <yumwang@ebay.com> Signed-off-by: Sean Owen <sean.owen@databricks.com> (cherry picked from commit 182da81e9e75ac1658a39014beb90e60495bf544) Signed-off-by: Sean Owen <sean.owen@databricks.com> | 18 September 2018, 15:39:04 UTC |

| cc3fbea | Wenchen Fan | 18 September 2018, 14:29:00 UTC | [SPARK-25445][BUILD] the release script should be able to publish a scala-2.12 build ## What changes were proposed in this pull request? Update the package and publish steps to support Scala 2.12. ## How was this patch tested? manual test Closes #22441 from cloud-fan/scala. Authored-by: Wenchen Fan <wenchen@databricks.com> Signed-off-by: Wenchen Fan <wenchen@databricks.com> (cherry picked from commit 1c0423b28705eb96237c0cb4e90f49305c64a997) Signed-off-by: Wenchen Fan <wenchen@databricks.com> | 18 September 2018, 14:29:26 UTC |